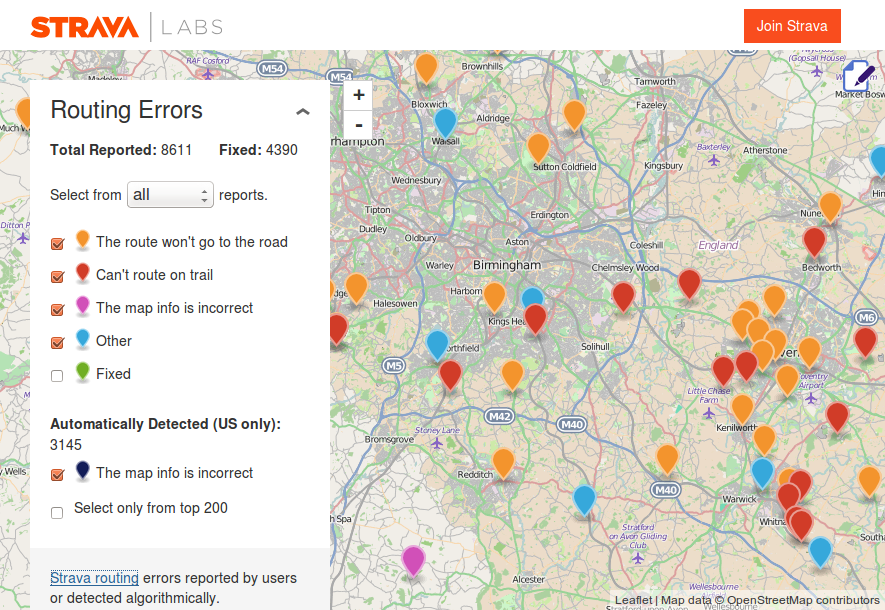

It is becoming increasingly difficult to spot errors in OpenStreetMap just by looking at the map on openstreetmap.org. To help, we have a collection of quality assurance tools, and this weekend I discovered a new one developed by Strava. The keen cyclists or runners amongst us will recognise the name: Strava is a phone app/service that enables you to monitor your athletic progress over time and compare it against others. To do this it keeps track of your run or ride using the GPS built into your smartphone. Consequently Strava have built up a large database of GPS traces and user-contributed map errors. From this they have built a “routing errors” website to help iron out any issues in the underlying map data, which naturally is based on OpenStreetMap.

The service highlights two different types of errors: those manually entered by its users, and those automatically detected from the map data and the recorded GPS traces (e.g. many cyclists riding the “wrong” way down what is a one-way street in OpenStreetMap suggests that the OSM data may be incorrect).
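To make the one-way example concrete, here is a minimal Python sketch of how such a check might work. It is not Strava's actual method, which I haven't seen; the function names, the 45° tolerance and the 20-trace / 30% thresholds are all assumptions picked for illustration.

```python
import math


def bearing(p, q):
    """Initial compass bearing in degrees from p to q, each a (lat, lon) pair."""
    lat1, lon1, lat2, lon2 = map(math.radians, (*p, *q))
    dlon = lon2 - lon1
    x = math.sin(dlon) * math.cos(lat2)
    y = (math.cos(lat1) * math.sin(lat2)
         - math.sin(lat1) * math.cos(lat2) * math.cos(dlon))
    return math.degrees(math.atan2(x, y)) % 360


def against_flow(way_start, way_end, trace_start, trace_end, tolerance=45.0):
    """True if a trace runs roughly opposite to the mapped one-way direction."""
    way_b = bearing(way_start, way_end)
    trace_b = bearing(trace_start, trace_end)
    # Smallest angle between the two bearings, in the range 0..180 degrees.
    diff = abs((trace_b - way_b + 180) % 360 - 180)
    return diff > 180 - tolerance


def suspicious_oneway(way_start, way_end, traces, min_traces=20, min_share=0.3):
    """Flag a one-way segment when enough traces travel against it.

    traces is a list of (start, end) point pairs along the segment.
    The thresholds are hypothetical, not taken from any real service.
    """
    if len(traces) < min_traces:
        return False  # too little evidence either way
    wrong = sum(against_flow(way_start, way_end, a, b) for a, b in traces)
    return wrong / len(traces) >= min_share
```

A flagged segment is only a hint: riders may simply be ignoring the signs, or a contraflow cycle lane may be missing from the map, so each case still needs checking by someone who knows the street.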

I’ve had a quick play with this new quality assurance tool (with mixed results), but I’d love to hear your views. One concern is that the manually submitted “errors” may reflect a problem with user behaviour or the Strava app itself, rather than an issue with the OpenStreetMap data, à la MapDust, a similar and now quite dated service from Skobbler. But is this view too pessimistic? Is there useful data in the manually submitted errors? And how about the (US only) automatically detected errors? Are these a reliable source of quality assurance data for OpenStreetMap?

Let us know your views in the comments section below and feel free to share any other great QA tips you may have.

Hjart

A year ago or so I fixed several hundred of these and I found it obvious from many of them that the vast majority of Strava users were quite confused about the effects of staring at a Google map when the underlying routing engine was based on OSM (which they had no idea about). Despite that I found many reports highlighting issues that most likely wouldn’t have been easily found otherwise. There definitely are gems to be found in there.

Eventually, though, I found that Strava’s copy of OSM didn’t seem to ever get updated, which resulted in new reports cropping up on issues I had already fixed a long time (half a year or more) ago, and that’s when I lost interest in fixing these errors. Hopefully this has improved now.

James

Hjart’s experience is close to mine. For a non-OSM based site, the bugs are pretty good. In the US, I’d give it a 30% good bug rate.

The old data thing is mildly annoying. The Strava site says they update routing data quarterly, but in my experience, it is closer to annually. They just did an update. One nice thing is that you can filter out the old errors, so stale errors do disappear.

Also, the Strava routing table hasn’t allowed travel on unclassified highways until the latest update. They didn’t allow routing on tertiary_links and bridleways. I haven’t checked whether that was fixed. These omissions lead to false positives.

Beyond this, the slide tool is pretty awesome for accurately mapping trails and fixing bad Tiger geometry.

Todd

I’ve also found the data in the Route tool to be well out of date, 6 months or more. And the error that was bothering me a month ago (fixed in OSM last year) is still there. Waiting… waiting… waiting. Seems a bit amateur to have a one-off import of OSM to a live website, even if it says “beta”. And I’ve just noticed in the route tool today that the Google copyright and terms links are overlaid on the OSM view. Very naughty Strava.